We have introduced the concept of algorithmic knowledge in order to

represent not only what agents know or can know, but also

how long they need to know what they can know. Our analyses up

to now can only answer the first question. By means of the systems

presented so far one can infer formulae of the form

![]() , but no definite statements of the form

, but no definite statements of the form ![]() or

or

![]() . However, to decide if an agent can solve a

problem under certain constraints, it is necessary to compute the

exact amount of time he needs to solve that problem.

. However, to decide if an agent can solve a

problem under certain constraints, it is necessary to compute the

exact amount of time he needs to solve that problem.

To answer the the ``How long''-question, a complexity analysis is needed. The underlying idea is simple. The complexities of many reasoning problem classes are well-known and can be computed at a low cost. (Complexities are typically, but not necessarily, measured by functions of the input size.) Since the average computation speed of an agent can be assumed to be constant, the amount of time he needs to solve some problem can be computed on the basis of the complexity function for the problem's class5.4.

Suppose that any formula ![]() in a class

in a class ![]() can be

computed at the cost

can be

computed at the cost ![]() , where

, where ![]() is the length

of

is the length

of ![]() . Let

. Let ![]() be a constant number that measures the

computation speed of an agent

be a constant number that measures the

computation speed of an agent ![]() . If

. If

![]() and if

agent

and if

agent ![]() is able to infer

is able to infer ![]() , then it can be inferred that

, then it can be inferred that ![]() is able to compute

is able to compute ![]() within

within

![]() time

units. That is,

time

units. That is,

![]() can be assumed to be

true. So, by the aid of complexity theory we can obtain epistemic

principles for specific problem classes.

can be assumed to be

true. So, by the aid of complexity theory we can obtain epistemic

principles for specific problem classes.

We shall not make any assumption about the nature of the complexity

measures and develop our logics independent of the complexity theory

in use. We calculate the cost of computing a formula of the language

![]() by way of cost functions, which are

agent-dependent, partial funtions from

by way of cost functions, which are

agent-dependent, partial funtions from

![]() to the

set of natural numbers. That is, a cost function

to the

set of natural numbers. That is, a cost function ![]() is defined for

each agent

is defined for

each agent ![]() . Intuitively,

. Intuitively, ![]() is the number of time units

needed by

is the number of time units

needed by ![]() to decide

to decide ![]() on the basis of all what he

knows. Such a cost funtion is defined on the basis of known complexity

functions for specific problem classes which can be expressed in the

language. Clearly,

on the basis of all what he

knows. Such a cost funtion is defined on the basis of known complexity

functions for specific problem classes which can be expressed in the

language. Clearly, ![]() is defined only for certain sets of formulae,

namely for those formulae whose complexities are known. The agents'

computation speed and the details how the complexity of a certain

formula is measured are encapsulated in the specification of the cost

function.

is defined only for certain sets of formulae,

namely for those formulae whose complexities are known. The agents'

computation speed and the details how the complexity of a certain

formula is measured are encapsulated in the specification of the cost

function.

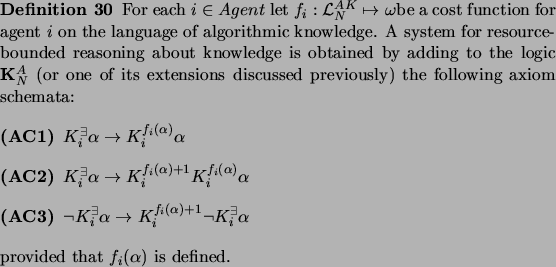

If a cost function ![]() is defined for a formula, then certain

epistemic statements concerning that formula can be made. If the

formula

is defined for a formula, then certain

epistemic statements concerning that formula can be made. If the

formula ![]() can be inferred reliably by the agent

can be inferred reliably by the agent ![]() , then the

amount of time needed to infer it is

, then the

amount of time needed to infer it is ![]() , so

, so

![]() can be assumed to be true. Whether or not

can be assumed to be true. Whether or not

![]() can be inferred reliably by

can be inferred reliably by ![]() , the introspective knowledge

of that can be established after

, the introspective knowledge

of that can be established after ![]() time units, because

his computation of

time units, because

his computation of ![]() will return a positive or negative answer

after at most

will return a positive or negative answer

after at most ![]() time units. Therefore,

time units. Therefore,

![]() and

and

![]() are valid. Those

axioms will be added to the systems of algorithmic knowledge examined

earlier to make more powerful logics for resource-bounded reasoning.

are valid. Those

axioms will be added to the systems of algorithmic knowledge examined

earlier to make more powerful logics for resource-bounded reasoning.

The complexity analysis makes it possible to prove many unconditional,

definite epistemic sentences of the form ![]() . Let

. Let ![]() be a propositional tautology and let

be a propositional tautology and let ![]() be

defined. Applying the rule (NEC

be

defined. Applying the rule (NEC![]() ) yields the theorem

) yields the theorem

![]() . Hence,

. Hence,

![]() can be

derived, by (AC1).

can be

derived, by (AC1).

The main task in specifying a system of algorithmic knowledge with

complexity analysis is to define the cost functions for the language

![]() . Let us consider how such cost functions can be

constructed. We have argued in section 5.2.1 that if

. Let us consider how such cost functions can be

constructed. We have argued in section 5.2.1 that if

![]() is provable then

is provable then

![]() can be inferred. We

have posed the question if natural number

can be inferred. We

have posed the question if natural number ![]() can be determined such

that the stronger sentence

can be determined such

that the stronger sentence ![]() can be assumed as a

postulate. It is not yet known whether or not the provability problem

for K

can be assumed as a

postulate. It is not yet known whether or not the provability problem

for K![]() is decidable, so we restrict our attention to

certain subclasses. Let

is decidable, so we restrict our attention to

certain subclasses. Let ![]() be an objective formula. Then it can

be decided in time

be an objective formula. Then it can

be decided in time

![]() , as we know from complexity

theory. If an agent is able to recognize objective formulae and to

select a special procedure to compute them, he can derive reliably

each objective tautology

, as we know from complexity

theory. If an agent is able to recognize objective formulae and to

select a special procedure to compute them, he can derive reliably

each objective tautology ![]() in a time proportional to

in a time proportional to

![]() .

.

Interestingly, the previous analysis can be used by an agent within

the system in order to reason about himself or about other agents,

provided that he has a built-in mechanism to calculate the complexity

of reasoning problems. (Such a mechanism can be adopted easily by an

agent.) Assume that an agent ![]() tries to find out how long agent

tries to find out how long agent ![]() will need to infer a formula

will need to infer a formula ![]() if he chooses to. It is

plausible to assume that

if he chooses to. It is

plausible to assume that ![]() can recognize relatively easily that

can recognize relatively easily that

![]() belongs to the class of objective formulae, so he can reason

about agent

belongs to the class of objective formulae, so he can reason

about agent ![]() exactly like we did before to find out that the time

agent

exactly like we did before to find out that the time

agent ![]() needs is proportional to

needs is proportional to

![]() . Generally,

. Generally, ![]() does not know

does not know ![]() 's computation speed. However, he may obtain that

information from external sources or through his own observations. If

's computation speed. However, he may obtain that

information from external sources or through his own observations. If

![]() knows the computation speed of

knows the computation speed of ![]() , he will be able to compute the

amount of time needed by

, he will be able to compute the

amount of time needed by ![]() to infer

to infer ![]() . In other words, he can

calculate

. In other words, he can

calculate ![]() for any objective formula

for any objective formula ![]() . But to

estimate the time

. But to

estimate the time ![]() would need to derive

would need to derive ![]() , agent

, agent ![]() does

not have to actually derive it. He has only to recognize the class

that

does

not have to actually derive it. He has only to recognize the class

that ![]() belongs to and then to calculate the complexity of

belongs to and then to calculate the complexity of

![]() , which can be accomplished in linear time. So

, which can be accomplished in linear time. So

![]() is a

plausible postulate, where

is a

plausible postulate, where ![]() is the computation speed of

is the computation speed of ![]() .

.

Hence, the definition of the complexity function for ![]() may

include the following clauses: for all objective formulae

may

include the following clauses: for all objective formulae ![]()

The complexity of the decision procedures for normal modal logic has

been investigated extensively ([HM92].) It has been shown (cf. theorem 28) that modal epistemic logic can be embedded

faithfully to our systems of algorithmic knowledge, i.e., there is a

fragment of the language

![]() which can be

translated one-to-one to modal logic. Consequently, the complexity

results obtained for normal modal logic can be applied to determine

the cost of solving problems which can be formalized in that

fragment. In that way the cost function

which can be

translated one-to-one to modal logic. Consequently, the complexity

results obtained for normal modal logic can be applied to determine

the cost of solving problems which can be formalized in that

fragment. In that way the cost function ![]() can be specified for

that fragment.

can be specified for

that fragment.