For modeling knowledge with time constraints we need to use some model

of time measurement. As remarked previously, we shall deal with

sentences of the form ``if asked about ![]() , agent

, agent ![]() can derive

reliably the answer within

can derive

reliably the answer within ![]() time units''. For simplicity we shall

use natural numbers to measure time, i.e., we assume that

time units''. For simplicity we shall

use natural numbers to measure time, i.e., we assume that ![]() is a

natural number. So the language we consider should contain formulae of

the form

is a

natural number. So the language we consider should contain formulae of

the form ![]() where

where ![]() is the name of an agent,

is the name of an agent, ![]() is a

natural number, and

is a

natural number, and ![]() is a formula. The formula

is a formula. The formula ![]() can be read ``agent

can be read ``agent ![]() knows

knows ![]() within

within ![]() units of time'' and

is interpreted: ``if agent

units of time'' and

is interpreted: ``if agent ![]() chooses to derive

chooses to derive ![]() from his

current knowledge, then after at most

from his

current knowledge, then after at most ![]() time units he will

succeed'', or alternatively, ``if asked about

time units he will

succeed'', or alternatively, ``if asked about ![]() ,

, ![]() is able to

derive reliably the answer 'yes' within

is able to

derive reliably the answer 'yes' within ![]() units of time''. That is,

we require not only that agent

units of time''. That is,

we require not only that agent ![]() have at least one procedure to

compute

have at least one procedure to

compute ![]() , but also that

, but also that ![]() be able to choose the correct

procedure leading to

be able to choose the correct

procedure leading to ![]() under the given time constraint, namely,

to arrive at the conclusion

under the given time constraint, namely,

to arrive at the conclusion ![]() after at most

after at most ![]() time

units5.2.

time

units5.2.

Sometimes it can be assumed safely that an agent is able to infer some

fact, but it is not possible to estimate accurately how long the

computation would take. For example, the complexity of the employed

algorithm or the agent's inference strategy may not be known

completely. To deal with such cases we introduce a sort of

quantification into the language. We consider statements of the form

``there is a number ![]() such that the agent

such that the agent ![]() is able to compute the

fact

is able to compute the

fact ![]() within

within ![]() units of time''. Such a statement is

formalized by the formula

units of time''. Such a statement is

formalized by the formula

![]() , which can be read:

``agent

, which can be read:

``agent ![]() can infer

can infer ![]() reliably in finite time''. That is,

when presented with the fact

reliably in finite time''. That is,

when presented with the fact ![]() , the agent is able to choose a

suitable algorithm which runs on

, the agent is able to choose a

suitable algorithm which runs on ![]() and terminates with the

(correct) answer after finitely many steps.

and terminates with the

(correct) answer after finitely many steps.

The formula ![]() entails the following facts about the

agent's

entails the following facts about the

agent's ![]() information state. First, the formula

information state. First, the formula ![]() follows

(with respect to the logic used by

follows

(with respect to the logic used by ![]() ) from all what

) from all what ![]() knows. Second, agent

knows. Second, agent ![]() has an algorithm to establish that connection

and which he is able to select to use when he chooses to compute

has an algorithm to establish that connection

and which he is able to select to use when he chooses to compute

![]() . Third, that computation takes at most

. Third, that computation takes at most ![]() time unit. The

formula

time unit. The

formula

![]() is weaker in the sense that it does not

tell exactly how long the computation of

is weaker in the sense that it does not

tell exactly how long the computation of ![]() will take. It only

says that the computation is guaranteed to terminate.

will take. It only

says that the computation is guaranteed to terminate.

Our notion of knowledge can be called algorithmic knowledge: knowledge

is tied up with an algorithm to establish it. It represents not only

factual knowledge but also a kind of procedural knowledge. The

concepts of explicit and implicit knowledge can be regarded naturally

as two special cases of algorithmic knowledge. Explicit knowledge can

be defined as ![]() , which says that agent

, which says that agent ![]() has immediate

access to the information

has immediate

access to the information ![]() and can act on it. Implicit

knowledge is defined as

and can act on it. Implicit

knowledge is defined as

![]() : agent

: agent ![]() knows

knows

![]() implicitly if he is able to compute

implicitly if he is able to compute ![]() when

required. This is, however, not the only way to define a useful notion

of implicit knowledge. For instance, one can stipulate that an agent

knows a fact implicitly it can be inferred from his explicit knowledge

(with respect to some inference system).

when

required. This is, however, not the only way to define a useful notion

of implicit knowledge. For instance, one can stipulate that an agent

knows a fact implicitly it can be inferred from his explicit knowledge

(with respect to some inference system).

Our use of the term ``algorithmic knowledge'' as explained above

should not be confused with other usages found elsewhere in the

literature. Binmore and Shin ([BS92]) use the term to

emphasize the connection between knowledge and provability (see also

[SW94]). In their terminology, an agent's algorithmic

knowledge is whatever the agent can infer using a Turing machine. The

properties of this concept are studied and related to properties of

provability concepts. Halpern, Moses, and Vardi ([HMV94]) also

define algorithmic knowledge in terms of computation: an agent is said

to know a fact at a certain state if at that state he can compute that

he knows that fact. That is, given his local data, his local algorithm

terminates and outputs the answer ``Yes'' when presented with the

fact. Clearly, these concepts describe knowledge that is not

necessarily available immediately to the agent. They are in spirit

related to our concept of implicit knowledge, defined above as

![]() . Hence, they both fall under the category of

implicit knowledge in our classification of chapter 2:

they characterize a kind of information that is implicitly available

to an agent but must be computed and made explicit before the agent

can act upon.

. Hence, they both fall under the category of

implicit knowledge in our classification of chapter 2:

they characterize a kind of information that is implicitly available

to an agent but must be computed and made explicit before the agent

can act upon.

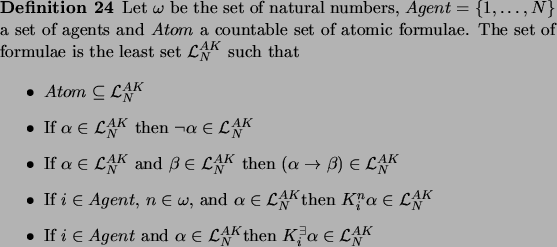

Formally, the language

![]() of algorithmic knowledge

for

of algorithmic knowledge

for ![]() agents is defined as follows:

agents is defined as follows:

The rationality of agents is expressed through two capacities: first,

the ability to draw logical consequences from what is already known,

and second, the ability to compute the complexities of certain

reasoning problems in order to infer when something can be known. It

should be stressed that these two capacities are implementable. Agents

have been frequently supplied with inference machines which allow them

to infer new information from what has been known. The complexities of

many problems can be computed at a low cost from their syntactic

structures alone, so it is not hard to build into agents the

capability to recognize the structure of a problem and estimate the

cost to solve it. It turns out that we can develop quite rich theories

of the algorithmic notion of knowledge we have introduced. To develop

logics of algorithmic knowledge we try to establish logical

relationships among the formulae of the language

![]() . This is done by developing the framework for

reasoning about explicit knowledge (chapter 4) a step

further.

. This is done by developing the framework for

reasoning about explicit knowledge (chapter 4) a step

further.