Another solution to the LOP consists in introducing a new operator of awareness into the language and requiring that belief include awareness ([FH88]). The underlying intuition is that agents need to be aware of a concept before they can have beliefs about it: one cannot know something one is completely unaware of. On the other hand, if an agent is aware of a formula $\alpha$ and implicitly knows $\alpha$, then he knows $\alpha$ explicitly. The notion of awareness is left unspecified. Some possible interpretations of ``agent $i$ is aware of $\alpha$'' are: ``$i$ is familiar with all the propositions mentioned in $\alpha$'', ``$i$ is able to figure out the truth of $\alpha$'', or ``$i$ is able to compute the truth of $\alpha$ within time $T$''.

For better comparison with other approaches, my presentation of the awareness framework will not follow the original definition ([FH88]) in detail. The main intuitions are retained, however. In particular, there are no modal operators for implicit knowledge and awareness. The knowledge operators of the language $\mathcal{L}$ are now interpreted as explicit knowledge and will be evaluated accordingly in the definition of models.

![\begin{definition}[Awareness structures] An aw... ...in S$, if $sR_it$\ then $M,t\models \alpha$\end{itemize}\end{definition}](img130.png)

Intuitively, $\mathcal{A}_i(s)$ is the set of formulae that agent $i$ is aware of at state $s$, and the relations $R_i$ are used to model implicit knowledge. The set of formulae that an agent is aware of can be arbitrary and need not be closed under any law. Moreover, there is no relationship between (implicit) knowledge and awareness at all: the function $\mathcal{A}_i$ and the relation $R_i$ are completely independent. Since explicit knowledge is defined as implicit knowledge plus awareness, it follows immediately that if an agent is aware of all formulae of the language then explicit knowledge reduces to implicit knowledge.
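The evaluation of explicit knowledge can be made concrete with a small Python sketch. It is illustrative only: the tuple encoding of formulae and the names `AwarenessModel`, `holds` and `make_model` are assumptions made for this example, not notation from the text. Following the definition, $K_i\alpha$ is true at $s$ when $\alpha\in\mathcal{A}_i(s)$ and $\alpha$ holds at every $t$ with $sR_it$; the awareness function is passed in as an arbitrary membership test, reflecting its independence from $R_i$.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set, Tuple

# Hypothetical tuple encoding of formulae:
#   ("atom", "p"), ("not", f), ("and", f, g), ("or", f, g), ("imp", f, g), ("K", i, f)
Formula = tuple


@dataclass
class AwarenessModel:
    valuation: Dict[str, Set[str]]              # state -> atoms true at that state
    access: Dict[int, Set[Tuple[str, str]]]     # agent i -> pairs (s, t) with s R_i t
    aware: Callable[[int, str, Formula], bool]  # does the formula belong to A_i(s)?


def holds(m: AwarenessModel, s: str, f: Formula) -> bool:
    """Truth of f at state s; K_i is read as explicit knowledge."""
    op = f[0]
    if op == "atom":
        return f[1] in m.valuation[s]
    if op == "not":
        return not holds(m, s, f[1])
    if op == "and":
        return holds(m, s, f[1]) and holds(m, s, f[2])
    if op == "or":
        return holds(m, s, f[1]) or holds(m, s, f[2])
    if op == "imp":
        return (not holds(m, s, f[1])) or holds(m, s, f[2])
    if op == "K":
        i, g = f[1], f[2]
        # implicit knowledge: g is true at every R_i-successor of s
        implicit = all(holds(m, t, g) for (u, t) in m.access[i] if u == s)
        # explicit knowledge: awareness of g plus implicit knowledge of g
        return m.aware(i, s, g) and implicit
    raise ValueError(f"unknown connective: {op!r}")


p = ("atom", "p")
K1p = ("K", 1, p)
K1_taut = ("K", 1, ("or", p, ("not", p)))


def make_model(aware):
    # two states, p true in both, agent 1 cannot distinguish them
    pairs = {(u, t) for u in ("s", "t") for t in ("s", "t")}
    return AwarenessModel({"s": {"p"}, "t": {"p"}}, {1: pairs}, aware)


full = make_model(lambda i, s, f: True)    # aware of every formula
empty = make_model(lambda i, s, f: False)  # aware of nothing
only_p = make_model(lambda i, s, f: f == p)

print(holds(full, "s", K1p))       # True: explicit knowledge = implicit knowledge
print(holds(empty, "s", K1p))      # False: p is implicitly, not explicitly, known
print(holds(only_p, "s", K1_taut)) # False: a valid formula the agent is unaware of
```

With full awareness the `aware` test is always true, so `holds` for `K` reduces to the implicit-knowledge check, which is the reduction stated above.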

Because an agent may be aware of a sentence without being aware of its logical consequences or of sentences equivalent to it, the theorems and inference rules of modal epistemic systems do not hold in general. Hence the forms of logical omniscience discussed in chapter 2 are avoided.
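A single state is enough to see these failures. The following Python sketch is a hypothetical illustration (the tuple encoding and the names `AwarenessModel` and `holds` are assumptions of the example): agent 1 implicitly knows everything true at the one reflexive state, but is aware only of $p\land q$.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set, Tuple

# Hypothetical encoding: ("atom", "p"), ("not", f), ("and", f, g),
# ("or", f, g), ("imp", f, g), ("K", i, f)
Formula = tuple


@dataclass
class AwarenessModel:
    valuation: Dict[str, Set[str]]              # state -> atoms true there
    access: Dict[int, Set[Tuple[str, str]]]     # agent i -> pairs (s, t) with s R_i t
    aware: Callable[[int, str, Formula], bool]  # membership test for A_i(s)


def holds(m: AwarenessModel, s: str, f: Formula) -> bool:
    op = f[0]
    if op == "atom":
        return f[1] in m.valuation[s]
    if op == "not":
        return not holds(m, s, f[1])
    if op == "and":
        return holds(m, s, f[1]) and holds(m, s, f[2])
    if op == "or":
        return holds(m, s, f[1]) or holds(m, s, f[2])
    if op == "imp":
        return (not holds(m, s, f[1])) or holds(m, s, f[2])
    if op == "K":  # explicit knowledge = awareness + implicit knowledge
        i, g = f[1], f[2]
        implicit = all(holds(m, t, g) for (u, t) in m.access[i] if u == s)
        return m.aware(i, s, g) and implicit
    raise ValueError(f"unknown connective: {op!r}")


p, q = ("atom", "p"), ("atom", "q")
p_and_q, q_and_p = ("and", p, q), ("and", q, p)

# One reflexive state where p and q are true; A_1(s) = {p ∧ q} (assumption).
m = AwarenessModel(
    valuation={"s": {"p", "q"}},
    access={1: {("s", "s")}},
    aware=lambda i, s, f: f == p_and_q,
)

print(holds(m, "s", ("K", 1, p_and_q)))                # True
print(holds(m, "s", ("K", 1, q_and_p)))                # False: equivalent sentence
print(holds(m, "s", ("K", 1, p)))                      # False: logical consequence
print(holds(m, "s", ("K", 1, ("or", p, ("not", p)))))  # False: valid formula
```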

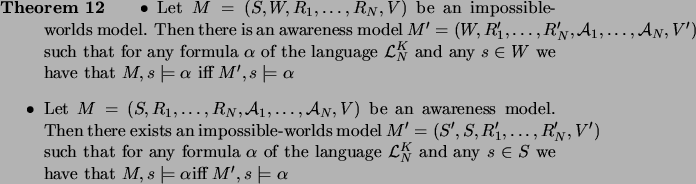

That the awareness approach is able to model non-omniscient agents can be seen in another way. We have seen earlier that the impossible-worlds approach avoids all forms of logical omniscience. The following theorem shows that although the intuitions are quite different, the impossible-worlds approach and the awareness approach are equivalent in a precise sense (cf. [Wan90], [Thi93], [FHMV95]).

As an immediate consequence of this theorem, the awareness framework also solves all forms of the LOP: if an undesirable property can be falsified in an impossible-worlds model, then it can also be falsified in an awareness model. In fact, it is easy to see that the set of $\mathcal{L}$-formulae which are valid with respect to all awareness models consists of exactly the instances of propositional tautologies. In other words, no genuine epistemic statement is valid with respect to the class of all awareness models.
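One way to see the last claim, sketched here under the assumption that the definition places no constraint such as seriality on $R_i$: at a state with no $R_i$-successor every formula is implicitly known, so $K_i\beta$ holds there exactly when $\beta\in\mathcal{A}_i(s)$. Since awareness is arbitrary, the formulae $K_i\beta$ then behave like independent propositional atoms. The hypothetical Python sketch below (tuple encoding and helper names are illustrative assumptions) falsifies $K_1(p\lor\neg p)$ and $K_1p\rightarrow K_1(p\lor q)$ in such models, while the tautology instance $K_1p\lor\neg K_1p$ holds in each of them.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set, Tuple

# Hypothetical encoding: ("atom", "p"), ("not", f), ("and", f, g),
# ("or", f, g), ("imp", f, g), ("K", i, f)
Formula = tuple


@dataclass
class AwarenessModel:
    valuation: Dict[str, Set[str]]              # state -> atoms true there
    access: Dict[int, Set[Tuple[str, str]]]     # agent i -> pairs (s, t) with s R_i t
    aware: Callable[[int, str, Formula], bool]  # membership test for A_i(s)


def holds(m: AwarenessModel, s: str, f: Formula) -> bool:
    op = f[0]
    if op == "atom":
        return f[1] in m.valuation[s]
    if op == "not":
        return not holds(m, s, f[1])
    if op == "and":
        return holds(m, s, f[1]) and holds(m, s, f[2])
    if op == "or":
        return holds(m, s, f[1]) or holds(m, s, f[2])
    if op == "imp":
        return (not holds(m, s, f[1])) or holds(m, s, f[2])
    if op == "K":  # explicit knowledge = awareness + implicit knowledge
        i, g = f[1], f[2]
        implicit = all(holds(m, t, g) for (u, t) in m.access[i] if u == s)
        return m.aware(i, s, g) and implicit
    raise ValueError(f"unknown connective: {op!r}")


def K1(f: Formula) -> Formula:
    return ("K", 1, f)


def model_with_awareness(known: Set[Formula]) -> AwarenessModel:
    """One state s with no R_1-successor, so K_1 b holds iff b is in `known`."""
    return AwarenessModel(
        valuation={"s": {"p"}},
        access={1: set()},
        aware=lambda i, s, f: f in known,
    )


p, q = ("atom", "p"), ("atom", "q")
taut_instance = ("or", K1(p), ("not", K1(p)))  # instance of A ∨ ¬A
necessitation = K1(("or", p, ("not", p)))      # K_1(p ∨ ¬p)
closure = ("imp", K1(p), K1(("or", p, q)))     # K_1 p → K_1(p ∨ q)

models = [model_with_awareness(set()), model_with_awareness({p})]
print([holds(m, "s", taut_instance) for m in models])  # [True, True]
print(holds(models[0], "s", necessitation))            # False
print(holds(models[1], "s", closure))                  # False
```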

So far the concept of awareness has been left unspecified, so no meaningful restrictions can be placed on the set of formulae that an agent is aware of. Once a concrete interpretation has been fixed, some closure properties can be added to the awareness function to capture certain types of ``awareness''.

For example, if we consider a computer program that never computes the truth of a formula unless it has computed the truth of all its subformulae, then we may assume that awareness is closed under subformulae, i.e., if $\alpha\in\mathcal{A}_i(s)$ and $\beta$ is a subformula of $\alpha$ then $\beta\in\mathcal{A}_i(s)$. This assumption may seem innocuous at first, but it turns out to have a rather strong impact on the properties of explicit knowledge. It can be shown easily that if awareness is closed under subformulae then an agent's knowledge is closed under material implication, i.e., the schema (K), $K_i\alpha\land K_i(\alpha\rightarrow\beta)\rightarrow K_i\beta$, is valid: awareness of $\alpha\rightarrow\beta$ implies awareness of its subformula $\beta$, and implicit knowledge is closed under material implication. In general, whenever $\beta$ follows logically from $\alpha_1,\ldots,\alpha_n$ and $\beta$ is a subformula of one of $\alpha_1,\ldots,\alpha_n$, then $K_i\beta$ follows from $K_i\alpha_1,\ldots,K_i\alpha_n$, for any agent $i$.
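The effect of subformula closure on (K) can be checked by brute force on a small class of models. The Python sketch below is illustrative only: the tuple encoding, the helpers `holds`, `subformulas` and `closure`, and the finite model class (two states, agent 1 with a universal accessibility relation, every valuation of $p,q$, awareness generated from every subset of four seed formulae) are assumptions chosen for the example. A finite search like this confirms instances or exhibits counterexamples; it does not by itself prove validity.

```python
from dataclasses import dataclass
from itertools import chain, combinations, product
from typing import Callable, Dict, Set, Tuple

# Hypothetical encoding: ("atom", "p"), ("not", f), ("and", f, g),
# ("or", f, g), ("imp", f, g), ("K", i, f)
Formula = tuple


@dataclass
class AwarenessModel:
    valuation: Dict[str, Set[str]]              # state -> atoms true there
    access: Dict[int, Set[Tuple[str, str]]]     # agent i -> pairs (s, t) with s R_i t
    aware: Callable[[int, str, Formula], bool]  # membership test for A_i(s)


def holds(m: AwarenessModel, s: str, f: Formula) -> bool:
    op = f[0]
    if op == "atom":
        return f[1] in m.valuation[s]
    if op == "not":
        return not holds(m, s, f[1])
    if op in ("and", "or", "imp"):
        a, b = holds(m, s, f[1]), holds(m, s, f[2])
        return (a and b) if op == "and" else (a or b) if op == "or" else (not a or b)
    if op == "K":  # explicit knowledge = awareness + implicit knowledge
        i, g = f[1], f[2]
        implicit = all(holds(m, t, g) for (u, t) in m.access[i] if u == s)
        return m.aware(i, s, g) and implicit
    raise ValueError(f"unknown connective: {op!r}")


def subformulas(f: Formula):
    """Yield f and all of its subformulae."""
    yield f
    if f[0] == "not":
        yield from subformulas(f[1])
    elif f[0] in ("and", "or", "imp"):
        yield from subformulas(f[1])
        yield from subformulas(f[2])
    elif f[0] == "K":
        yield from subformulas(f[2])


def closure(seed) -> Set[Formula]:
    """Smallest subformula-closed set containing every formula in `seed`."""
    return {g for f in seed for g in subformulas(f)}


p, q = ("atom", "p"), ("atom", "q")
p_imp_q = ("imp", p, q)
schema_K = ("imp", ("and", ("K", 1, p), ("K", 1, p_imp_q)), ("K", 1, q))

candidates = [p, q, p_imp_q, ("imp", q, p)]
seeds = list(chain.from_iterable(combinations(candidates, r) for r in range(5)))
states = ("s", "t")
pairs = {(u, v) for u in states for v in states}  # agent 1 cannot tell s from t
valuations = [dict(zip(states, vs))
              for vs in product([set(), {"p"}, {"q"}, {"p", "q"}], repeat=2)]


def schema_holds_everywhere(make_awareness_set) -> bool:
    for val, seed in product(valuations, seeds):
        A = make_awareness_set(seed)
        m = AwarenessModel(val, {1: pairs}, lambda i, s, f: f in A)
        if not all(holds(m, s, schema_K) for s in states):
            return False
    return True


print(schema_holds_everywhere(closure))  # True: closed awareness validates (K) here
print(schema_holds_everywhere(set))      # False: e.g. aware of p, p -> q but not q
```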

Another possible closure property for awareness is that an agent might be aware of only a subset $\Phi_i$ of the atomic formulae. In this case one could assume that $\mathcal{A}_i(s)$ consists of exactly those formulae that are built up from the atomic formulae in $\Phi_i$. Under this assumption some forms of logical omniscience are avoided, e.g., knowledge of valid formulae or closure under logical implication. However, all forms of the LOP occur again when attention is restricted to the sublanguage generated by $\Phi_i$.
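Atom-generated awareness can be sketched as a membership test: a formula is in $\mathcal{A}_i(s)$ iff all of its atoms lie in $\Phi_i$. In the hypothetical Python example below (the tuple encoding, the helpers `holds` and `atoms`, and the choice $\Phi_1=\{p\}$ are assumptions of the example), agent 1 fails to know the valid formula $q\lor\neg q$ and the consequence $p\lor q$ of a known $p$, yet within the $p$-sublanguage knows valid formulae and consequences as an omniscient agent would.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set, Tuple

# Hypothetical encoding: ("atom", "p"), ("not", f), ("and", f, g),
# ("or", f, g), ("imp", f, g), ("K", i, f)
Formula = tuple


@dataclass
class AwarenessModel:
    valuation: Dict[str, Set[str]]              # state -> atoms true there
    access: Dict[int, Set[Tuple[str, str]]]     # agent i -> pairs (s, t) with s R_i t
    aware: Callable[[int, str, Formula], bool]  # membership test for A_i(s)


def holds(m: AwarenessModel, s: str, f: Formula) -> bool:
    op = f[0]
    if op == "atom":
        return f[1] in m.valuation[s]
    if op == "not":
        return not holds(m, s, f[1])
    if op == "and":
        return holds(m, s, f[1]) and holds(m, s, f[2])
    if op == "or":
        return holds(m, s, f[1]) or holds(m, s, f[2])
    if op == "imp":
        return (not holds(m, s, f[1])) or holds(m, s, f[2])
    if op == "K":  # explicit knowledge = awareness + implicit knowledge
        i, g = f[1], f[2]
        implicit = all(holds(m, t, g) for (u, t) in m.access[i] if u == s)
        return m.aware(i, s, g) and implicit
    raise ValueError(f"unknown connective: {op!r}")


def atoms(f: Formula) -> Set[str]:
    """Atomic formulae occurring in f."""
    if f[0] == "atom":
        return {f[1]}
    if f[0] == "not":
        return atoms(f[1])
    if f[0] == "K":
        return atoms(f[2])
    return atoms(f[1]) | atoms(f[2])


def K1(f: Formula) -> Formula:
    return ("K", 1, f)


Phi = {1: {"p"}}  # hypothetical: agent 1 is aware only of the atom p
m = AwarenessModel(
    valuation={"s": {"p"}},                         # p true, q false
    access={1: {("s", "s")}},
    aware=lambda i, s, f: atoms(f) <= Phi[i],       # A_1(s) = formulae over Phi_1
)

p, q = ("atom", "p"), ("atom", "q")
print(holds(m, "s", K1(("or", q, ("not", q)))))  # False: valid, but mentions q
print(holds(m, "s", K1(("or", p, q))))           # False: follows from p, mentions q
print(holds(m, "s", K1(("or", p, ("not", p)))))  # True: valid in the p-sublanguage
print(holds(m, "s", K1(("not", ("not", p)))))    # True: consequence of K_1 p
```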